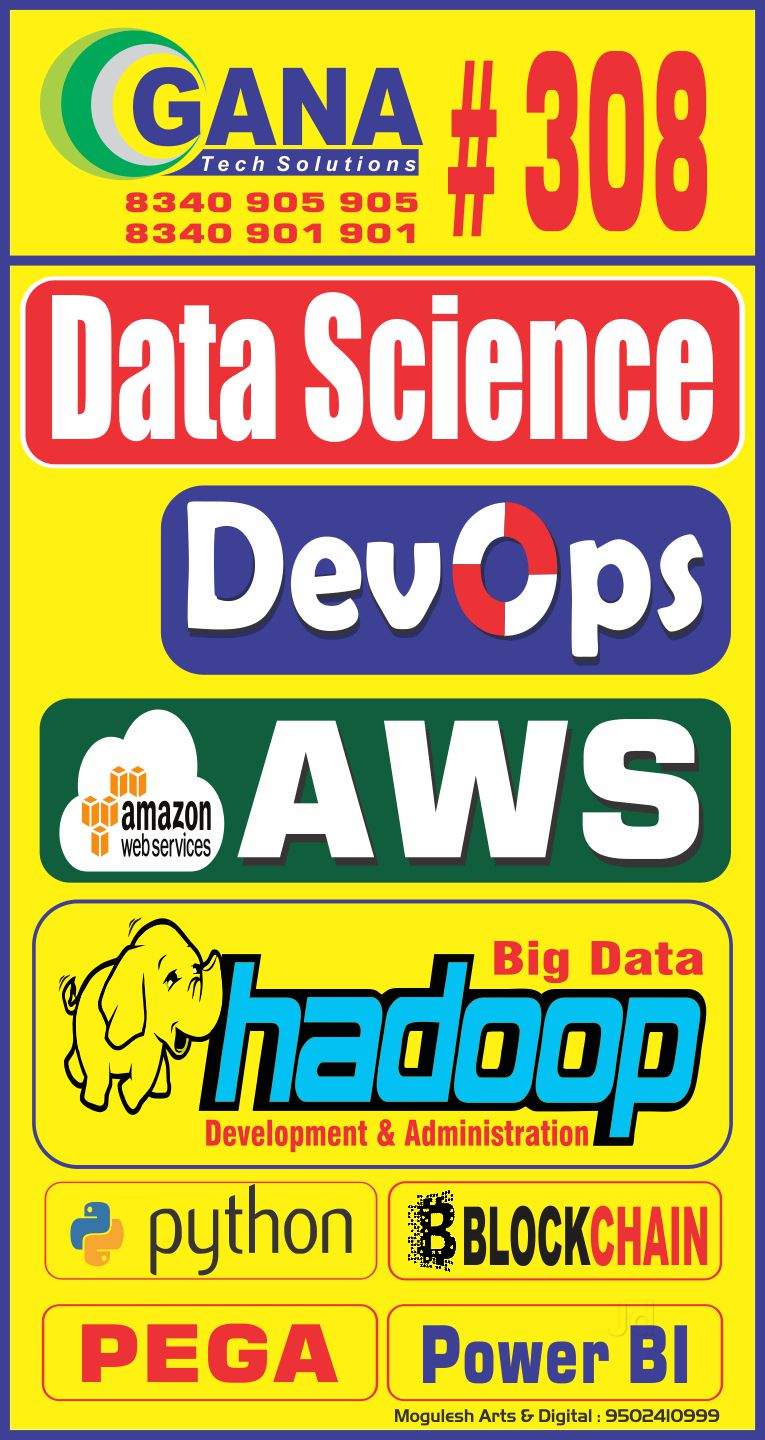

Hadoop training in Hyderabad

HADOOP

Big Data Hadoop is one of the latest buzzwords in the IT world.

Data is now processed on an immense scale, which creates the need for more IT employees who can keep pace with this technical progress.

In this context, Big Data Analytics deserves attention, as these skills can lead to better job opportunities in the IT field today.

ABOUT

Hadoop is an open-source software framework, licensed under the Apache License 2.0, that supports data-intensive distributed applications.

The Hadoop framework transparently provides both reliability and data motion to applications.

In addition, it provides a distributed file system that stores data on the compute nodes, providing very high aggregate bandwidth across the cluster.

It enables applications to work with thousands of computationally independent computers and petabytes of data.

Hadoop is written in the Java programming language and is an Apache top-level project built and used by a global community of contributors.

Hadoop and its related projects (Hive, HBase, ZooKeeper, and so on) have many contributors from across the ecosystem.

WHY IS HADOOP IMPORTANT?

1-Ability to store and process huge amounts of any kind of data, quickly. With data volumes and varieties constantly increasing, especially from social media and the Internet of Things (IoT), that's a key consideration.

2-Computing power. Hadoop's distributed computing model processes big data fast. The more computing nodes you use, the more processing power you have.

3-Fault tolerance. Data and application processing are protected against hardware failure.

4-Low cost. The open-source framework is free and uses commodity hardware to store large quantities of data.

5-Scalability. You can easily grow your system to handle more data simply by adding nodes. Little administration is required.
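
To make the "computing power" and "scalability" points more concrete, here is a minimal sketch of the map-and-reduce style of processing that Hadoop is known for. It is plain Python, not the Hadoop API: the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the `num_nodes` parameter are hypothetical, and the "nodes" are simulated by slicing a list. The idea it illustrates is that the input is split across nodes, each node processes its own share independently, and the partial results are then combined.

```python
from collections import defaultdict


def split_into_chunks(lines, num_nodes):
    """Deal lines out round-robin to num_nodes simulated worker nodes."""
    return [lines[i::num_nodes] for i in range(num_nodes)]


def map_phase(chunk):
    """Emit (word, 1) pairs for one chunk, as a single node would."""
    return [(word.lower(), 1) for line in chunk for word in line.split()]


def shuffle(mapped_outputs):
    """Group the emitted values by key across the outputs of all nodes."""
    grouped = defaultdict(list)
    for pairs in mapped_outputs:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Combine the grouped values for each key into a final count."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(lines, num_nodes):
    chunks = split_into_chunks(lines, num_nodes)
    # Assumption: on a real cluster each chunk would be mapped in parallel
    # on a different machine; here the chunks are processed one by one.
    mapped = [map_phase(chunk) for chunk in chunks]
    return reduce_phase(shuffle(mapped))


lines = ["big data needs big clusters", "hadoop stores big data"]
counts = word_count(lines, num_nodes=2)
```

Changing `num_nodes` changes how the work is divided but not the final counts, which is the property that lets a system of this kind grow by adding nodes.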